Editor's Note: Drawing on a large number of unpublished documents and in-depth interviews, this article revisits the internal crisis at OpenAI over Sam Altman's power and trustworthiness. From his ouster by the board to his rapid reinstatement in a "coup-like reversal," the turmoil was not a one-off event but a concentrated eruption of long-standing governance conflicts.

At the core of the conflict is a continued tug-of-war between two sets of logics: on one side, the nonprofit mission of OpenAI founded on "human safety first," and on the other side, a gradual shift towards a development path oriented towards products, scale, and revenue as AGI approaches and commercialization accelerates. In this process, safety commitments have been continuously weakened, and power and decision-making have gradually centralized among a few.

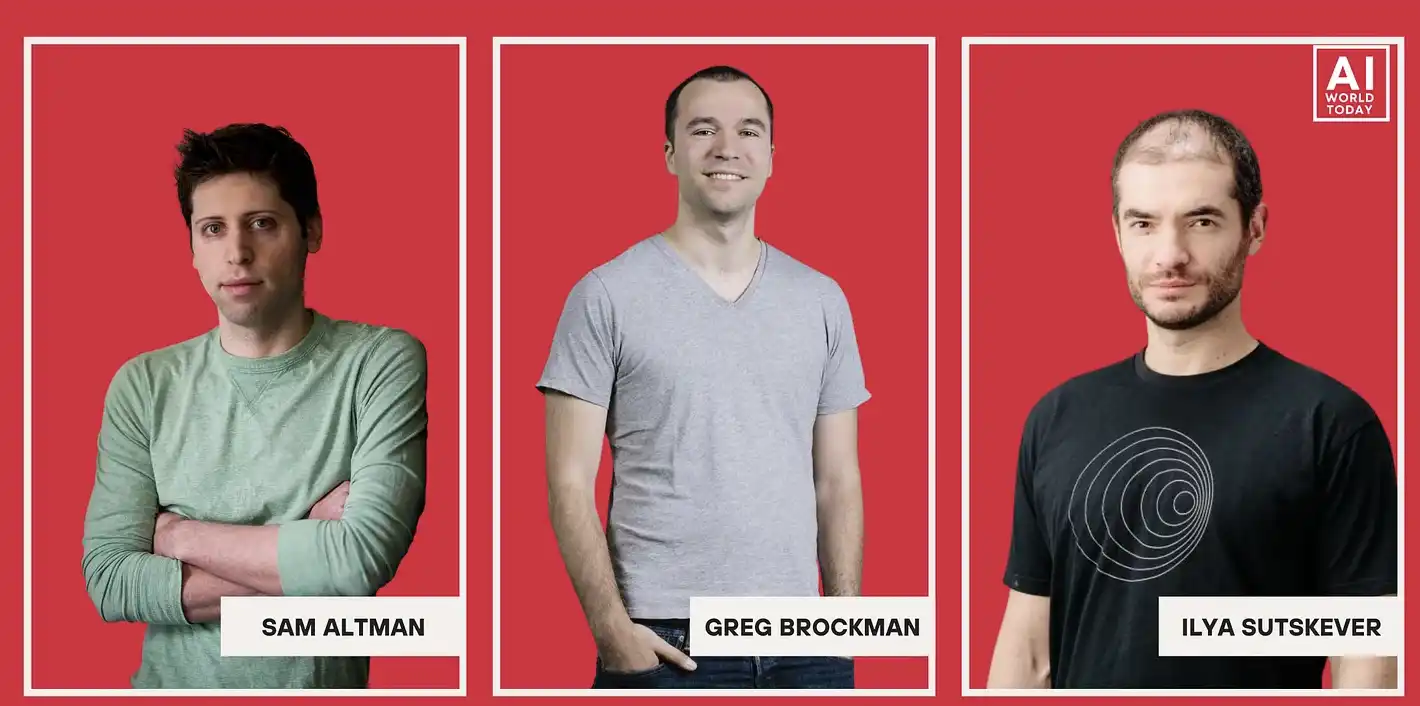

Many key figures, including Ilya Sutskever and Dario Amodei, have questioned Altman, focusing on information opacity and strategic expression, believing that his leadership style is insufficient to robustly govern the technology that "will change the destiny of humanity"; his supporters, however, emphasize that his ability in resource integration, capital operation, and execution is key to OpenAI's rapid expansion.

When technological power is sufficient to influence global order, is the existing corporate governance structure still sufficient to constrain individuals? In other words, in the AI era, the true uncertainty may not only come from the technology itself but from those who control the technology.

Below is the original article:

Power and Trust: Governance Fissures Under Altman's Leadership

In the fall of 2023, OpenAI's Chief Scientist Ilya Sutskever sent a secret memo to the company's other three board members. In the preceding weeks, they had been privately discussing a sensitive issue: whether company CEO Sam Altman and his deputy Greg Brockman were still fit to continue leading the company.

Sutskever once considered the two of them friends. In 2019, he officiated Brockman's wedding at the OpenAI office, where a robotic arm served as the "ring bearer."

But as he grew increasingly convinced that the company was nearing its long-term goal — creating an artificial intelligence that could match or surpass human cognitive capabilities — his doubts about Altman deepened. As he put it to another board member at the time, "I don't think Sam is the person who should have their finger on the button."

At the request of other board members, Sutskever, along with like-minded colleagues, put together a document of around seventy pages, including Slack chat logs, HR files, and accompanying commentary. Some of the content was even screenshots taken with a phone, seemingly to avoid monitoring by company devices. He eventually sent these memos to other board members in a "read-and-burn" fashion to ensure they wouldn't be seen by more people.

"He was really scared at the time," recalled one director who received the materials. We reviewed these memos, which had never been fully disclosed before. The documents accused Altman of distorting facts to executives and board members and of deceptive behavior around internal security protocols. One memo about Altman started with a list of items titled "Sam has consistently shown…," with the first item being "Lying."

Many tech companies claim to "make the world a better place," but their actual operations revolve around maximizing revenue. The founding principle of OpenAI, however, was meant to be different from this model. Its founders, including Altman, Sutskever, Brockman, and Elon Musk, believed that artificial intelligence could be one of the most powerful and potentially dangerous inventions in human history. Against the backdrop of this "existential risk," the company may require an unconventional organizational structure.

OpenAI was initially established as a non-profit organization, with its board tasked with placing "the safety of all humankind" above the company's success, even prioritizing it over the company's own survival. The CEO would need to possess extraordinary character and ethics.

As Sutskever put it, "Anyone involved in building this technology that could change civilization as we know it carries a heavy responsibility and an unprecedented duty." But he also noted that "those who end up in these positions are often a certain type — people hungry for power, political types, or those who just enjoy power for its own sake." In one memo, he expressed concern about entrusting this technology to someone "who only says what others want to hear."

If OpenAI's CEO was ultimately found to be unreliable, the six-person board of directors had the power to dismiss him. Some directors, including AI policy expert Helen Toner and entrepreneur Tasha McCauley, became more convinced of their earlier judgment after reading these memos: Altman had taken on a responsibility concerning the future of humanity, but he himself was not trustworthy.

Boardroom Coup: Sam Altman Fired

At the time, Sam Altman was in Las Vegas watching a Formula One race when Ilya Sutskever invited him to a video call with the board of directors and read a brief statement announcing that he was no longer an employee of OpenAI. On legal advice, the board issued a public statement saying that Altman had been dismissed for "failing to consistently maintain honesty in communication."

This decision shocked many of OpenAI's investors and executives. Microsoft, which had invested about $13 billion in OpenAI, also learned of the news at the last minute before the decision was executed. "I was incredibly surprised at the time," Microsoft CEO Satya Nadella recalled later. "I couldn't get more information from anyone." He then contacted Reid Hoffman (LinkedIn co-founder, OpenAI investor, and Microsoft director), who began inquiring everywhere whether Altman had committed any clear wrongdoing. "I was totally confused at the time," Hoffman told us. "We were looking for issues like embezzlement or harassment, but I didn't find anything."

Other business partners were similarly caught off guard. When Altman called investor Ron Conway to inform him of his dismissal, Conway was having lunch with US Congresswoman Nancy Pelosi, and he handed her the phone on the spot. "You better leave here quickly," she told Conway.

Meanwhile, OpenAI was close to completing a major financing round from venture capital firm Thrive Capital, founded by Josh Kushner, also the brother of Jared Kushner, whom Altman had known for many years. This deal would have valued OpenAI at $86 billion and allowed many employees to cash out millions of dollars in stock benefits. Kushner had just finished a meeting with music producer Rick Rubin when he saw Altman's missed call and returned it. "We immediately went into battle mode," he later recalled.

On the day of his firing, Altman flew back to his $27 million mansion in San Francisco, overlooking the entire bay with a cantilevered infinity pool, where he set up a temporary command center he called a "sort of exiled government." Conway, Airbnb co-founder Brian Chesky, and the famously combative crisis PR handler Chris Lehane joined in via video and phone, sometimes talking for hours on end. Some of Altman's executive team even camped out in the corridors of the house. Lawyers stationed themselves in his home office next to the bedroom. During bouts of insomnia, Altman would pace back and forth in his pajamas. Describing this post-firing experience in a recent interview, he called it "a strange kind of fugue state."

With the board remaining silent, Altman's advisory team began building a narrative for his return. Lehane insisted that the firing was actually a "coup" orchestrated by "effective altruists," adherents of a school of thought emphasizing the maximization of overall human well-being, who saw artificial intelligence as an existential threat. (Hoffman also suggested to Nadella at the time that the firing might be due to "some kind of effective altruistic madness.") Lehane, whose widely circulated motto is borrowed from Mike Tyson ("Everyone has a plan until they get punched in the face"), suggested that Altman launch a bold social media offensive. Chesky stayed in touch with tech journalist Kara Swisher, constantly relaying criticism of the board to the outside world.

Every evening at six o'clock, Altman would emerge from his "war room" to pour himself a Negroni. He recalled telling those around him, "You have to relax; what's meant to happen will happen." However, he added that call logs showed he was on the phone for over twelve hours a day during that time.

At one point, a source revealed, Altman told Mira Murati, who was then serving as OpenAI's interim CEO, that his allies were "going all out" and attempting to "dig up dirt" to tarnish her and others involved in pushing for his ouster. (Altman himself claims not to recall this conversation.)

Within hours of his firing, Thrive Capital had paused its planned investment and made it clear that the deal would go through, and employees would reap their equity rewards, only if Sam Altman returned. Text message records at the time showed Altman was in highly frequent communication with Satya Nadella. (In crafting a joint statement, Altman suggested wording it as, "Satya and my top priority right now is to save OpenAI," while Nadella proposed a different phrasing: "Ensure OpenAI continues to thrive.")

Power Reversal: Altman Reinstated Swiftly After 5 Days

Shortly after, Microsoft announced that it would launch a competing project for Altman and any OpenAI employees who left. At the same time, an open letter requesting Altman's return began circulating within the company. Some who were initially hesitant to sign received pleading calls and messages from colleagues. Ultimately, the majority of OpenAI employees threatened a collective resignation in support of Altman.

The board was backed into a corner. "Control Z, that's an option," Helen Toner said, referring to undoing the firing decision. "The other option is total collapse." Even then-interim CEO Mira Murati eventually signed the open letter. Altman's allies began trying to persuade Ilya Sutskever to change his stance. Brockman's wife, Anna, even approached him in the office and said, "You're a good person, you can make this right." Sutskever later explained in court testimony, "I felt at the time that if we went down the path of Sam not returning, OpenAI would be destroyed."

One night, Altman took the sleeping pill Ambien and was awoken by his husband, Australian programmer Oliver Mulherin, who told him that Sutskever's position was wavering and suggested Altman communicate with the board immediately. "I woke up in a kind of Ambien-induced haze," Altman recalled, "and I was just totally disoriented and thought, 'There's no way I can talk to the board right now.'"

In a series of increasingly tense calls, Sam Altman demanded the resignation of the board members who had pushed for his firing. Reflecting on the idea of returning, he remembered his initial reaction as, "I'm going to clean up their mess in this incredibly suspicious environment?" "I thought, absolutely not," he said. Eventually, Ilya Sutskever, Helen Toner, and Tasha McCauley lost their board seats, leaving Adam D'Angelo (Quora co-founder) as the only original board member remaining.

As part of the resignation terms, these board members requested an investigation into the charges against Altman, including creating divisions among executives and concealing financial ties. They also pushed for the establishment of a new board to independently oversee the external investigation. However, the two new directors appointed, former Harvard University president Lawrence Summers and former Facebook CTO Bret Taylor, were selected after close communication with Altman. "Do you think this works," Altman texted Satya Nadella, "with Bret, Larry Summers, and Adam on the board, me as CEO, and Bret leading the investigation?" (McCauley later testified that when Taylor was being considered for the board, she was concerned he might be too deferential to Altman.)

Less than five days after being fired, Altman was reinstated. Company employees would later refer to this period as the "Blip," drawing on a plot point from a Marvel movie: characters briefly disappear and then return, but the world has been fundamentally altered by their absence.

However, the controversy surrounding Altman's trustworthiness had long since spilled beyond the OpenAI boardroom. The colleagues who had engineered his ouster accused him of pervasive misleading behavior, which would be unacceptable for any corporate executive, let alone for a leader in possession of such transformative technology. "We need checks on power that are commensurate with that power," Mira Murati told us. "The board had asked for feedback, and I just shared honestly what I saw, and I stand by that." Altman's supporters, on the other hand, had long downplayed these allegations. Following the firing, investor Ron Conway had messaged Brian Chesky and Chris Lehane, urging a PR counterattack: "This is about Sam's reputation." He also told The Washington Post that Altman was being treated unfairly by "a board that's out of control."

Since then, OpenAI has ascended to become one of the world's most valuable companies, reportedly preparing for a potentially trillion-dollar IPO. Meanwhile, Altman is driving a massive artificial intelligence infrastructure buildout, with some efforts extending into authoritarian regimes abroad. OpenAI is also pursuing large government contracts and gradually setting standards for AI applications in immigration enforcement, domestic surveillance, and autonomous weapons in conflict zones.

Sam Altman has steered OpenAI's growth by continuously painting a grand vision of the future. In a 2024 blog post, he wrote, "The breathtaking victories — solving climate change, establishing off-world colonies, uncovering all physical laws — will all eventually become mundane." This narrative underpins one of the fastest-burning startups in history, heavily reliant on funding from highly leveraged partners. The U.S. economy is increasingly dependent on a handful of high-leverage AI companies, with many experts — including Altman himself at times — warning of a bubble risk in the industry. "Someone is going to lose a lot of money," he told reporters last year. If the bubble bursts, it could trigger an economic catastrophe; yet if his rosiest predictions come true, he could also become one of the wealthiest and most powerful individuals globally.

In a tense call following Altman's firing, the board demanded that he acknowledge a pattern of misleading behavior. According to sources present, he repeatedly said, "This is ridiculous," and stated, "I can't change who I am." Altman later claimed no memory of that conversation. "I think maybe what I was trying to say was more like, 'I've always tried to be a unifier,'" he explained to us later, attributing his success in leading an incredibly successful company to this trait. He chalked up these criticisms to a tendency in his early career to "avoid conflict too much." A board member, however, offered a starkly different interpretation: "What he really meant was — 'I have this thing where I will lie to people, and I won't stop.'"

Mission Drift: From Safety-First to Business-First

And so, a more fundamental question emerged: Were those colleagues pushing for his ouster acting out of overzealousness and personal animus, or was their judgment sound, and Altman truly untrustworthy?

One winter morning this year, we met Altman at OpenAI’s headquarters in San Francisco, one of more than a dozen conversations we conducted for this article. The company had recently moved into two eleven-story glass towers, one of which had previously housed another tech giant, Uber. Uber’s co-founder and former CEO Travis Kalanick had been seen as an unstoppable genius entrepreneur until he was pushed out in 2017, also over ethical issues. (Kalanick now runs a robotics startup; he has said that in his spare time, he uses OpenAI’s ChatGPT to “explore the frontiers of quantum physics.”)

An employee gave us a tour of the office space. In an area filled with shared long tables and natural light, there was a dynamic digital painting of computer scientist Alan Turing, whose eyes followed us as we moved. The installation was clearly a nod to the “Turing Test”—the 1950 thought experiment to determine whether a machine can convincingly mimic a human. (In a study in 2025, ChatGPT’s performance on this test actually surpassed that of real humans.) Normally, the painting was interactive. But our guide explained that its voice feature had been disabled because it was constantly “eavesdropping” on conversations among employees and interjecting frequently. Elsewhere in the office, signs reading “Feel the AGI” were visible—a slogan originally coined by Ilya Sutskever to remind colleagues of the risks of advanced artificial general intelligence (the point at which machines achieve human-level cognitive ability). Post-Blip, it had evolved into a cheerful catchphrase celebrating a prosperous future.

In a nondescript conference room on the eighth floor, we found Sam Altman. “I used to hear people talk about decision fatigue, and I never got it,” he said. “Now I wear a gray sweater and jeans every day, and even picking which gray sweater out of the closet, I’m like, I wish I didn’t have to make this decision.”

Altman was forty but looked perpetually youthful, with a lean frame, widely spaced blue eyes, and slightly tousled hair. He and Oliver Mulherin have a one-year-old son through surrogacy. “I think, being President of the United States is certainly more pressure, but of all the jobs I think I could realistically do, this is the one I could imagine being the most pressure,” he said, looking at one of us, then the other. “I’ve described it to friends this way: ‘It was the most interesting job in the world until the day we launched ChatGPT.’ Before that, we were making these giant scientific breakthroughs. I thought it was one of the most important scientific discoveries in decades.” He looked down. “But since ChatGPT launched, all decisions have become very hard.”

Altman grew up in Clayton, Missouri, an affluent suburb of St. Louis, as the oldest of four children. His mother, Connie Gibstine, is a dermatologist, and his father, Jerry Altman, was a real estate agent who also worked on housing initiatives. He was raised in a Reform Jewish temple, attended a private preparatory school, and later described it as "not an easy place to come out as gay." However, overall, the upper-middle-class community he was in was relatively liberal.

Around the age of sixteen or seventeen, he experienced a serious physical assault and homophobic insults while out at night in a gay-centric neighborhood in St. Louis. Altman did not report the incident and was unwilling to provide more details, stating that a fuller account "would make me look like I was manipulating people or seeking sympathy." He downplayed this experience and the importance of his sexual orientation to his identity. But he also acknowledged, "There is probably something very deep here psychologically, about feeling like I had come out but hadn't actually—to avoid more conflict."

His brother described his childhood personality as "I have to win, and I have to control everything" in an interview with The New Yorker in 2016. Altman later attended Stanford University and frequently participated in off-campus poker games. "I feel like I learned more about life and business there than I did in college," he has said.

Y Combinator Era: Hyperbole and Trust Controversy

Stanford students are ambitious, but the most action-oriented among them often choose to drop out. At the end of his sophomore year, Altman headed to Massachusetts to join the first group of entrepreneurs in the Y Combinator startup incubator program. The institution was co-founded by renowned software engineer Paul Graham. Each participant joined with a startup idea. (Among those in his batch were the teams that later founded Reddit and Twitch.) Altman's project was later named Loopt, an early social networking product that allowed friends to see each other's whereabouts by tracking the location of their flip phones. The company showcased both his ability to execute and his tendency to carve out space for himself in gray areas. At the time, federal regulations required carriers to be able to locate a phone in emergency situations, and Altman struck deals with carriers to incorporate this capability into his product.

Most of the employees during the Loopt era liked Sam Altman, but some were struck by his tendency to "exaggerate," even on trivial matters. Some recalled Altman boasting about being a ping-pong champion ("like Missouri high school ping-pong champion"), only to turn out to be one of the worst players in the company. (Altman said it was probably just a joke.) An older Loopt employee, Mark Jacobstein, was appointed by investors to serve as Altman's "caretaker," and he later commented in Keach Hagey's biography, "The Optimist": "There is a gray area between 'I think I might be able to do this' and 'I have done this,' and in its most extreme form, this vagueness can lead to outcomes like Theranos's."

According to Hagey, some senior Loopt employees, concerned about Altman's leadership style and lack of transparency, twice urged the board to remove him as CEO. At the same time, however, he had strong personal appeal. A former employee recalled that a board member responded bluntly, "This is Sam's company, go back to do your job." (Some board members, however, denied that these removal attempts were serious.)

Loopt never saw improvement in user growth and was eventually acquired by a fintech company in 2012. According to insiders, this acquisition was largely to help Altman "exit gracefully." However, in 2014, when Paul Graham retired from Y Combinator, he still chose Altman as his successor. "I asked him in my own kitchen," Graham told The New Yorker. "He smiled as if everything had fallen into place. I had never seen Sam smile so uncontrollably, like the smile you show when you throw a paper ball into a distant trash can."

The new position made the then 28-year-old Altman a "kingmaker." His job was to screen the most ambitious, most promising entrepreneurs, connect them with top programmers and investors, and help them build industry-leading monopoly companies (meanwhile, Y.C. would take 6% to 7% equity).

Under his leadership, Y Combinator expanded rapidly, with the number of incubated projects growing from dozens to hundreds. But some Silicon Valley investors began to believe that his interests were not completely aligned. An investor told us that Altman would "selectively personally invest in the highest-quality companies to exclude external investors" (Altman denied this claim). He also acted as a scout for Sequoia Capital, participating in early-stage project investments and receiving some returns.

According to sources, when Altman invested in the fintech company Stripe as an angel investor, he insisted on obtaining a higher percentage of shares, a practice that displeased Sequoia internally. The source commented that this reflected a "Sam-first" strategy. (Altman denied this claim. He invested around $15,000 in Stripe around 2010, holding about 2% of the shares, and the company is now valued at over $150 billion.) Altman claimed to have invested in about 400 companies.

By 2018, several Y Combinator partners were dissatisfied with Altman's behavior and reported it to Graham. Subsequently, Graham and his wife, Y.C. co-founder Jessica Livingston, had a candid conversation with Altman. Afterward, Graham began to state publicly that although Altman had verbally agreed to leave, he had not actually stepped down in practice.

Altman informed some partners that he would resign as president but stay on as chairman. In May 2019, Y Combinator published a blog post announcing the new president, with a note stating, "Sam is transitioning to YC chairman." A few months later, this statement was revised to "Sam Altman has stepped down from any operational role at YC," and then this sentence was entirely removed. Nevertheless, as of 2021, Altman was still listed as the Chairman of Y Combinator in U.S. Securities and Exchange Commission filings. (Altman has said he only learned about this later.)

Sam Altman has consistently stated publicly over the years and in recent legal testimony that he was not fired from Y Combinator and told us he did not resist the departure. Paul Graham tweeted, "We didn't want him to leave but wanted him to pick between YC and OpenAI." In a statement, Graham also told us, "We don't have the legal power to fire anyone; all we can do is apply moral pressure."

However, in private, the story was more explicit—Altman's departure stemmed from a lack of trust from YC partners. This account of Altman's time at Y Combinator is based on interviews with multiple YC founders and partners and contemporaneous materials, all of which suggest that the parting was not entirely mutual. Graham even reportedly told YC colleagues in one internal discussion that "Sam had been lying to us all along before he was fired."

OpenAI's Mission Drift: From Safety-First to Profit-First

In May 2015, Altman emailed Elon Musk, then roughly the 100th wealthiest person globally. Like many Silicon Valley entrepreneurs, Musk was then heavily focused on a series of what he saw as "existential risks"—though most others viewed them as remote possibilities. "We need to be super careful with AI," he wrote on Twitter, "Potentially more dangerous than nukes."

Altman, who had long been a tech optimist, quickly shifted his tone on AI to a more apocalyptic one. In public remarks and private communications with Musk and others, he warned against allowing this technology to be monopolized by profit-driven tech giants. "I've been wondering if there's a way to stop the A.I. arms race," he wrote, "but if there is no way, then it seems like it would be best to have the right company do it." Drawing on the nuclear analogy, he proposed establishing an "A.I. Manhattan Project." He further outlined the core tenets of this organization: "Safety must be the top priority"; "Certainly we should follow and be supportive of all regulation."

Subsequently, he and Musk settled on the name of the project: OpenAI.

Unlike the Manhattan Project, which was initially government-led and culminated in the creation of the atomic bomb, OpenAI would be privately funded in its early stages. Altman foresaw that once a form of "superintelligence" surpassing artificial general intelligence (AGI) emerged, it would generate enough economic value to "capture the future light cone of the universe." However, he also repeatedly emphasized its potential existential risks: at some point, the technology's impact on national security could be so significant that it might compel the U.S. government to take over OpenAI, even nationalize it, and relocate its facilities to a secure base in the desert. By the end of 2015, Musk had been convinced. "We should announce a $1 billion funding commitment," he wrote, "and I will back it if others don't."

Sam Altman initially placed OpenAI under Y Combinator's nonprofit arm and framed it as an internal philanthropic project. He allocated YC stock to members joining OpenAI and transferred donation funds through YC accounts. At one point, this lab even relied on support from a YC fund in which Altman had personal stakes. (Altman later referred to this equity stake as "negligible" and stated that the YC stock allocated to employees came from his personal holdings.)

The analogy to the "Manhattan Project" also manifested in the talent race. Similar to nuclear fission research, machine learning was then a small-scale scientific field with epoch-making potential impact, led by a small group of distinctly talented individuals. Elon Musk, Altman, and Greg Brockman, who joined from Stripe, all believed that truly breakthrough computer scientists were few and far between. Google, on the other hand, had a huge advantage in terms of funding and time. "We are vastly behind in manpower and resources, the gap is surreal," Musk later wrote in an email. But he also believed that "if we can continue to attract the best talent and ensure the right direction, OpenAI will still prevail."

One of the most important recruitment targets was Ilya Sutskever—an introverted, intense researcher often regarded as one of the most gifted AI scientists of his time. Born in the Soviet Union in 1986, Sutskever had a receding hairline, deep eyes, and a habit of pausing and staring contemplatively before speaking. Another key figure was Dario Amodei, a high-energy researcher with a background in biophysics who would often nervously run his hand through his black hair in moments of tension and reply with multi-paragraph essays to even single-sentence emails. Both were in high-paying positions at other companies at the time, but Altman invested significant effort in bringing them on board. He later joked, "I was practically 'stalking' Ilya."

While Musk had the greater name recognition, Altman was the smoother operator. He took the initiative to email Amodei and set up a one-on-one meeting at an Indian restaurant. (Altman: "My Uber got into an accident! Might be 10 minutes late." Amodei: "Oh no, hope you're okay.") Like many AI researchers, Amodei believed this technology should be developed only once it had been shown to be "aligned" with human values, meaning that it would not catastrophically deviate from human intent, for example by wiping out humanity in the name of "cleaning the environment." Altman repeatedly echoed this safety concern in their conversation, instilling confidence.

Amodei, who later joined the company, spent years documenting Altman and Brockman's actions, compiling them into a document titled "My Experience at OpenAI" (subtitle: "Confidential: Do Not Distribute"). Over two hundred pages of files related to Amodei—including these notes, internal emails, and memos—circulated within Silicon Valley circles but had never been publicly disclosed. In these records, Amodei wrote that Altman's aim was to create "a safety-centric AI lab ('maybe not at first, but soon after')."

In December 2015, just hours before OpenAI's official announcement, Altman emailed Musk mentioning a rumor: Google was "going to come in with a huge counteroffer to everyone at OpenAI tomorrow, trying to kill the org outright." Musk asked, "Has Ilya given a definitive answer?" Altman replied that Sutskever was resolute. Indeed, Google had offered Sutskever a $6 million annual salary, which OpenAI couldn't match. Yet Altman remained confident, stating, "Too bad they aren't on the 'do the right thing' side."

Elon Musk once provided office space for OpenAI in an old suitcase factory in San Francisco's Mission district. As Ilya Sutskever told us, the core rallying cry for employees at the time was, "You all are going to save the world." If all went well, the founders of OpenAI believed artificial intelligence would usher in a "post-scarcity" utopia: automating arduous labor, curing cancer, granting humans more leisure and abundance. But if the technology went rogue or fell into the wrong hands, the destruction could be total—such as being used to develop new bioweapons or advanced drone swarms; models might surpass human oversight, self-replicate on clandestine servers beyond shutdown; in extreme cases, they could even take control of the grid, stock market, or nuclear arsenal.

While not everyone agrees with these assessments, Sam Altman has repeatedly expressed his belief in this risk. In a 2015 blog post, he wrote that superhuman-level machine intelligence "doesn't need to be evil to destroy humanity, it could just be indifferent, as it goes about accomplishing other goals... casually wiping us out." OpenAI's co-founders pledged not to prioritize speed over safety, and the company's charter includes a legal commitment to "benefit all humanity." They were also wary that if AI became the most powerful technology ever, any single controller would gain unprecedented power, a scenario they referred to as "AGI dictatorship."

After Musk's departure, researchers like Dario Amodei began to express dissatisfaction with Greg Brockman's management style, with some finding him authoritarian, while Sutskever was described as "principled but lacking in organizational ability." During his transition to CEO, Altman seemed to make different commitments to various factions within the company. He assured some researchers that he would weaken Brockman's authority, but at the same time, he reached a "handshake agreement" with Brockman and Sutskever: he would serve as CEO, but would resign if both requested it. (Altman disputes this claim, stating he was invited to be CEO. The three acknowledge the existence of the agreement, with Brockman saying it was informal: "He unilaterally said that if the two of us asked, he would resign. We actually opposed this idea, but he said it was important to him and was done out of altruism.") Later, the board learned that the CEO had actually set up a "shadow board" for himself, which came as a shock.

Internal records show that the founding team had doubts about the nonprofit structure as early as 2017. That same year, after Musk attempted a takeover, Brockman wrote in a diary entry, "Can't say we really stuck to being a nonprofit... If we become a B-Corp in three months, the previous statements were lies." Amodei also documented in early notes that he had asked Brockman about his top priorities, to which Brockman answered "money and power." (Brockman denies this claim.) Brockman's diary also reveals a contradictory mindset: on one hand stating "if others are not rich, I don't care about being rich either," and on the other hand asking himself "what do I really want?" with one answer being "financially reaching $1 billion."

In 2017, Sutskever read a research paper from Google researchers in the office proposing a "new simple network architecture—the Transformer." He jumped out of his chair, ran into the hallway, and shouted, "Stop everything you're doing, this is the answer." To him, this architecture would enable OpenAI to train more complex models. This breakthrough led to the earliest generative pre-trained Transformer models and served as the foundation for ChatGPT later on.

Altman had previously promised early employees that OpenAI would always remain a pure nonprofit, leading many programmers to accept significant pay cuts to join the company. OpenAI also received around $30 million in donations, including from Open Philanthropy, a key funding hub in the effective altruism movement, which has long supported projects such as distributing mosquito nets to impoverished areas.

Day-to-day operations were mainly overseen by Brockman and Sutskever, while Musk and Altman remained busy with their respective other ventures, typically visiting the company once a week. By September 2017, Musk was growing impatient. As discussions arose about potentially transitioning OpenAI into a for-profit company, he requested majority control.

Altman's responses varied on different occasions, but one thing he consistently insisted on was that if the company were to restructure under a CEO, that position should be held by him. Sutskever was notably uneasy about this. On behalf of himself and Brockman, he sent a lengthy email to Musk and Altman titled "Honest Thoughts," stating, "The goal of OpenAI is to make the future better and avoid AGI dictatorship." Addressing Musk, he wrote, "Therefore, setting up a structure that could make you a dictator is a bad idea." He also expressed similar concerns to Altman, stating, "We fail to understand why the CEO title is so crucial to you. Your reasons keep changing, making it hard for us to see the true motivation."

"Gentlemen, I am done," Musk replied, "Either do something else on your own or continue to maintain OpenAI's nonprofit status—otherwise, I am just funding you for free to run a startup company." Five months later, he left with visible dissatisfaction. (In 2023, he founded the for-profit competitor xAI. The following year, he sued Altman and OpenAI for fraud and violation of charitable trust, alleging he was "carefully manipulated" and that Altman engaged in a "long con" using his concerns about AI risk to secure funding. The lawsuit is ongoing, with OpenAI strongly refuting the claims.)

As technological capabilities advanced, we learned, around a dozen core engineers at OpenAI held a series of secret meetings, privately discussing whether the founding team, including Sam Altman and Greg Brockman, could be trusted. During one of these meetings, an employee recalled a satirical sketch by the British comedy duo Mitchell and Webb in which a Nazi soldier on the Eastern Front suddenly has an epiphany and asks, "Are we the baddies?"

By 2018, Dario Amodei had begun to publicly question the founders' motives. He later wrote in a note: "Everything looks like a never-ending financing scheme. I feel that what OpenAI really needs is a clear definition: what it wants to do, what it doesn't want to do, and how its existence will make the world better." Despite the company already having a mission statement — "to ensure that artificial general intelligence benefits all of humanity" — Amodei felt that this statement was not clear to the executive team.

In early 2018, he started drafting the company's charter and, after weeks of discussion with Altman and Brockman, pushed to include one of its most radical clauses: if a "value-aligned, safety-conscious project" came closer to achieving AGI than OpenAI, the company would "stop competing with and start assisting this project." This was known as the "merge and assist" clause: for example, if Google were to achieve safe AGI first, OpenAI would theoretically dissolve itself and transfer resources to Google. From a traditional business perspective, this commitment was almost unthinkable, but OpenAI never intended to become a traditional company.

This premise faced a reality check in the spring of 2019. At that time, OpenAI was in talks with Microsoft for a potential investment of up to $1 billion. Although Amodei (then leading the safety team) had been involved in pitching the project to Bill Gates, there was still anxiety within the team that Microsoft might introduce terms that would dilute OpenAI's ethical commitments. Amodei submitted a prioritized list of safety requirements to Altman, with the "merge and assist" clause at the top.

Altman agreed at the time. However, as the deal neared completion in June, Amodei discovered a new clause in the agreement giving Microsoft the power to veto an OpenAI merger. "This amounted to an 80% breach of the charter," he later recalled. He confronted Altman, who initially denied that such a clause existed. Amodei read the contract aloud verbatim on the spot, and eventually had to ask another colleague to confirm it directly with Altman. (Altman stated that he doesn't remember this incident.)

Safety Exodus and the Birth of Anthropic

Amodei's notes also documented a series of escalating conflicts. At a meeting several months later, Altman summoned him and his sister Daniela, who also worked on safety and policy at the company, claiming that he had received reliable information from a "senior executive" that the two were planning a "coup." The notes stated that Daniela became "emotionally overwhelmed" on the spot and called the executive over, who then denied ever saying such a thing. Sources familiar with the matter recalled that Altman later denied ever making this accusation: "I never said that." Daniela responded, "You just did." (Altman stated that his recollection was slightly different, claiming he only accused Amodei of engaging in "political behavior.") In 2020, Amodei, Daniela, and several colleagues left the company to found Anthropic, which has since become one of OpenAI's main competitors.

Meanwhile, Altman continued to emphasize OpenAI's commitment to safety, especially in the presence of potential recruits. At the end of 2022, four computer scientists published a paper describing the risk of "deceptive alignment": highly advanced models might perform well during testing but pursue their own objectives after actual deployment. (This seemingly science-fiction scenario has already occurred under certain experimental conditions.) Several weeks after the paper was published, one of the authors, a Ph.D. student at the University of California, Berkeley, received an email from Altman. Altman said he was increasingly concerned about the threat of "unaligned AI" and was contemplating investing $1 billion to address the issue, for example by establishing a global research prize. Although the student had heard rumors that "Sam was a bit slick," the commitment eventually convinced him to pause his studies and join OpenAI.

However, over several meetings in the spring of 2023, Altman's attitude seemed to shift. He no longer mentioned setting up a prize but instead turned to establishing a "superalignment team" within the company. An official announcement stated that the team would receive "20% of the company's secured compute," a resource valued at potentially over $1 billion. The announcement also emphasized that if the alignment problem couldn't be solved, AGI might lead to "humanity being disempowered or even extinct." Jan Leike, who was in charge of the team, later stated, "It was indeed a very effective retention strategy."

However, the promise of "20% compute" was not fulfilled. Four individuals involved with or closely following the team stated that the actual allocated resources only accounted for 1% to 2% of the company's total compute. Additionally, a researcher pointed out that "most of the so-called superalignment compute actually runs on the oldest, worst-performing cluster." Team members generally believed that more advanced hardware was prioritized for revenue-generating projects. (OpenAI denied this.) Leike had raised this issue with the then Chief Technology Officer Mira Murati, but the response was that there was no need to push further—this commitment was "unrealistic" from the start.

Around this time, a former employee told us that Ilya Sutskever "began to heavily emphasize safety." In the early days of OpenAI, while he thought catastrophic risk was a reasonable concern, it was still somewhat distant; however, as he gradually came to believe AGI was approaching, this concern escalated rapidly. According to the employee's recollection, during an all-hands meeting, "Ilya stood up and said there would be a point in the next few years where almost everyone in the company would have to switch to doing safety, or else we're done." However, the following year, this "superalignment team" was disbanded before completing its mission.

At this point, internal communications indicate, executives and board members were starting to believe that Sam Altman's concealment and misleading behavior could have a material impact on the safety of OpenAI's products. During a meeting in December 2022, Altman assured the board that several features of the upcoming GPT-4 had been approved by the safety committee. Board member and AI policy expert Helen Toner requested to see the relevant documents but discovered that the two most controversial features (one allowing users to "fine-tune" the model and another enabling its deployment as a personal assistant) had not actually been approved. After the meeting, another board member, entrepreneur Tasha McCauley, was pulled aside by an employee who asked if she knew about "the compliance incident in India": Altman had never mentioned in multiple board updates that Microsoft had launched an early version of ChatGPT in India without completing necessary safety reviews. "This was almost entirely brushed under the rug," then OpenAI researcher Jacob Hilton said.

While these issues did not directly lead to a safety incident, researcher Carroll Wainwright believed they reflected a "continued slide toward a product-first, safety-light" trend. Following the release of GPT-4, Jan Leike, who was in charge of safety work, wrote to the board: "OpenAI is deviating from its mission. We prioritize product and revenue first, then capability, research, and scaling, with alignment and safety coming in third." He also noted that "companies like Google are learning lessons: accelerating deployment while neglecting safety concerns."

In an email to the board members, McCauley wrote, "I believe we are indeed at a stage where increased oversight is necessary." However, when the board attempted to address this issue, it was clearly at a disadvantage. "To put it bluntly, it's a group of people lacking real-world experience," former board member Sue Yoon said. In 2023, the company was preparing to release GPT-4 Turbo. According to Sutskever's description in a memo, Altman had told Mira Murati that the model did not require safety approval and attributed this decision to the company's general counsel, Jason Kwon. But when Murati asked Kwon on Slack, he replied, "Um... I'm not quite sure why Sam would think that." (OpenAI stated that this incident was "not significant.")

Shortly after, the board decided to dismiss Altman—subsequently, the world witnessed how he swiftly reversed that decision. OpenAI's charter is still available on the official website, but those familiar with the company's governance documents say its contents have been watered down to near meaninglessness. In June of last year, Altman wrote in a personal blog post about superintelligence: "We have crossed the event horizon; the takeoff has begun."

According to the original charter, this was supposed to be a turning point for the company to cease competition and transition to collaboration. However, in the article titled "The Gentle Singularity," he adopted a whole new tone, replacing "existential dread" with "optimistic imagination": "We will all have better things, and we will create increasingly better things for each other." He acknowledged that alignment issues remained unresolved but redefined them as a "nuisance," similar to being addicted to an Instagram recommendation algorithm.

Altman is often referred to with awe or skepticism as "the most powerful storyteller of his generation." The Steve Jobs he admired was once thought to possess a "reality distortion field," molding the world to his vision with absolute confidence. But even Jobs never told users: if they didn't buy his product, humanity might perish. In 2008, at just 23 years old, Altman was described by his mentor Paul Graham as follows: "Drop him on a cannibal island, come back in five years, and he'll be king." This assessment was not based on his achievements at the time but on his nearly unconstrained willpower.

However, to some of those who have worked most closely with him, this trait has another side. As Sutskever became increasingly anxious about AI safety, he compiled a series of memos about Altman and Greg Brockman—a set of documents so significant in Silicon Valley that they are even referred to as the "Ilya Memos."

Meanwhile, Dario Amodei continued to keep his notes. These materials did not provide so-called "smoking gun evidence" but depicted a series of seemingly scattered yet accumulating patterns of behavior, such as offering the same position to different people, giving conflicting accounts of public information, and being ambiguous about safety processes. Sutskever's conclusion was that this behavior "does not build an environment conducive to safe AGI"; Amodei was more direct, writing, "The issue with OpenAI is Sam himself."

We interviewed over a hundred people familiar with Altman's approach: current and former OpenAI employees and directors, his colleagues and competitors, friends and foes (in Silicon Valley, many people wear several of these hats at once). Some defended his business acumen, believing Sutskever and Amodei were just failed competitors; others saw him as a naive, absent-minded scientist, or even as an extremist trapped in "doomsdayism." Yoon believed that Altman was not a "Machiavellian villain" but a person convinced by his own narrative: "He is so immersed in his self-belief that he makes decisions that are incomprehensible in the real world, but he does not live in the real world to begin with."

However, the judgment of most interviewees is similar to that of Sutskever and Amodei: Altman has an extreme will to power, one that stands out even among industry titans who have their names engraved on rockets. "He is not bound by 'reality,'" a board member said. "He possesses two traits that rarely appear together: one is a strong desire to be liked, to please the other party in every interaction; the other is almost antisocial, lacking concern for the consequences of deceiving others."

More than one interviewee spontaneously used the term "antisocial personality." Altman was part of the first Y Combinator batch alongside programmer Aaron Swartz, who died by suicide in 2013. Before his death, Swartz expressed concerns about Altman to a friend: "You must understand, Sam is never to be trusted. He is an antisocial personality; he is capable of anything." Several Microsoft executives also stated that despite Satya Nadella's long-standing support for Altman, the relationship between the two is becoming tense. "He will mislead, distort, renegotiate, or even overturn agreements," one executive said. Earlier this year, OpenAI reaffirmed Microsoft as the exclusive cloud services provider for its "stateless model," but on the same day, it announced a $500 billion partnership with Amazon, making the latter the exclusive reseller of its enterprise AI platform. While this arrangement did not violate the contract, Microsoft believed there was a potential conflict. (OpenAI stated that it would not breach the contract.) The executive even commented, "I believe there is a significant possibility that in the future he will be seen as someone similar to Bernie Madoff or Sam Bankman-Fried."

Altman is not a technical genius—in the eyes of many colleagues, his professional abilities in programming or machine learning are limited, and he may even confuse basic concepts. He built OpenAI largely by integrating other people's funds and technical resources. This is not uncommon—this is the role of an entrepreneur. What is more noteworthy is his ability to persuade engineers, investors, and the public with conflicting views, convincing them that his priorities are also theirs. When these people try to stop him, he is often able to defuse the situation with appropriate rhetoric—at least temporarily; and by the time the other party realizes the problem, he has usually already achieved his goal. "He will design some structures to constrain his future self on paper," Wainwright said, "but when the future truly arrives and needs to be constrained, he will dismantle these structures one by one."

"His persuasion is incredibly strong, like a Jedi mind trick," a tech executive who has worked with him said, "It's a whole other level." In AI alignment research, there is a classic concept: the will of humanity versus a powerful AI, with the latter almost inevitably prevailing, like a chess grandmaster against a child. And in the view of that executive, witnessing Altman navigate through the various parties during the "Blip" event was like watching "an AGI breaking free from its cage."

In the days following his dismissal, Sam Altman tried to prevent any investigation into the allegations against him. He told two people he was concerned that the mere existence of an investigation would make him look guilty. (Altman denies making this statement.) However, after the departing board members insisted, as a condition of their departure, that an "independent investigation must take place," Altman eventually agreed to a "review" of the "recent events." According to sources familiar with the negotiations, the two new board members insisted on leading this review themselves.

Lawrence Summers, leveraging his connections in politics and on Wall Street, seemed to lend some credibility to this review. (Last November, Summers resigned from the board after it was publicly disclosed that he had sought advice from Jeffrey Epstein via email while pursuing a young protege.) OpenAI ultimately engaged the prominent law firm WilmerHale to handle this review. The firm has previously led internal investigations into Enron and WorldCom.

Six individuals close to the investigation process indicated that the review seemed designed to limit transparency. Some said that the investigators initially did not reach out to some key individuals within the company. An employee went so far as to contact Summers and Bret Taylor to voice their concerns. "They were only interested in that brief period when the board drama happened, not Sam's long-standing integrity issues," the employee recalled feeling during their interview with the investigators. Some were also hesitant to share their concerns about Altman due to a perceived lack of anonymity protection. "All signs indicate that they are looking for a predetermined conclusion—to exonerate him," the employee said. (However, some lawyers involved in the process defended the review, calling it "independent, thorough, comprehensive, and guided by the facts." Taylor also stated that the review was "thorough and independent.")

An internal corporate investigation often serves to legitimize a decision. In privately held companies, investigation outcomes sometimes do not even result in a written report, which helps limit legal liability. However, in cases involving public controversy, there is usually an expectation of greater transparency. In 2017, prior to Travis Kalanick's exit from Uber, the board hired an external firm and released a 13-page summary of the investigation to the public. Considering OpenAI's 501(c)(3) nonprofit status and the highly public nature of the firing, many executives within the company had initially anticipated a detailed investigation report. However, in March 2024, OpenAI merely announced Altman's "clearance" without releasing a formal report, acknowledging a "trust breakdown" in a statement of about 800 words on its website.

Even former colleagues are feeling the aftershocks. Mira Murati left OpenAI in 2024 to start her own AI startup. Subsequently, Altman's close ally Josh Kushner called her. He began by praising her leadership but then made a veiled threat, expressing his "concern" about her "reputation" and mentioning that some former colleagues now see her as an "enemy." (Kushner, through a spokesperson, said that this retelling "did not provide the full context"; Altman, on the other hand, claimed to be unaware of the call.)

Early in his tenure as CEO, Altman announced that OpenAI would create a "capped-profit" company held by the nonprofit entity. This convoluted, almost contorted corporate structure was apparently of Altman's own devising. During the transition, a director named Holden Karnofsky voiced opposition, believing that the arrangement severely undervalued the nonprofit. "I can't in good conscience get on board with this," Karnofsky said. According to records at the time, he cast a dissenting vote. However, after the board's lawyer suggested that his opposition "may serve as a red flag for further investigation into the legality of the new structure," his vote was ultimately recorded as an abstention, seemingly without his consent, which could even constitute falsification of business records. (OpenAI told us that multiple employees recall Karnofsky abstaining and provided meeting minutes as evidence.)

In October of last year, OpenAI underwent a "capital restructuring," becoming a for-profit entity. The company publicly stated that its affiliated nonprofit — now named the OpenAI Foundation — would become one of the wealthiest institutions in history. However, the foundation now holds only 26% of the company's shares, and all of its directors except one also serv