A few days ago, Stella Laurenzo, Head of AI at AMD, posted an issue titled "Claude Code Unusable for Complex Engineering Tasks" in the Claude Code official repository. This was not a user's emotional complaint but a quantitative analysis based on 6,800 sessions. It brought to the forefront the AI community's most unwilling-to-face issue, with one set of numbers particularly standing out: a cost-saving configuration tweak by Anthropic skyrocketed this team's API monthly bill from $345 to $42,121.

Laurenzo's team tracked 235,000 tool invocations, 18,000 prompts, and documented the systemic performance degradation of Claude Code since February 2026. This report was later covered by The Register, sparking a two-week-long storm of public opinion in the developer community.

Boris Cherny, Head of the Anthropic Claude Code team, provided an explanation on Hacker News. On February 9, with the release of Opus 4.6, a "self-thinking" mechanism was enabled by default, where the model autonomously decides the thought duration. On March 3, Anthropic then lowered the default thinking effort to 85. The official explanation was "the optimal balance point between intelligence, latency, and cost." The actual impact of these two adjustments is evident from the data.

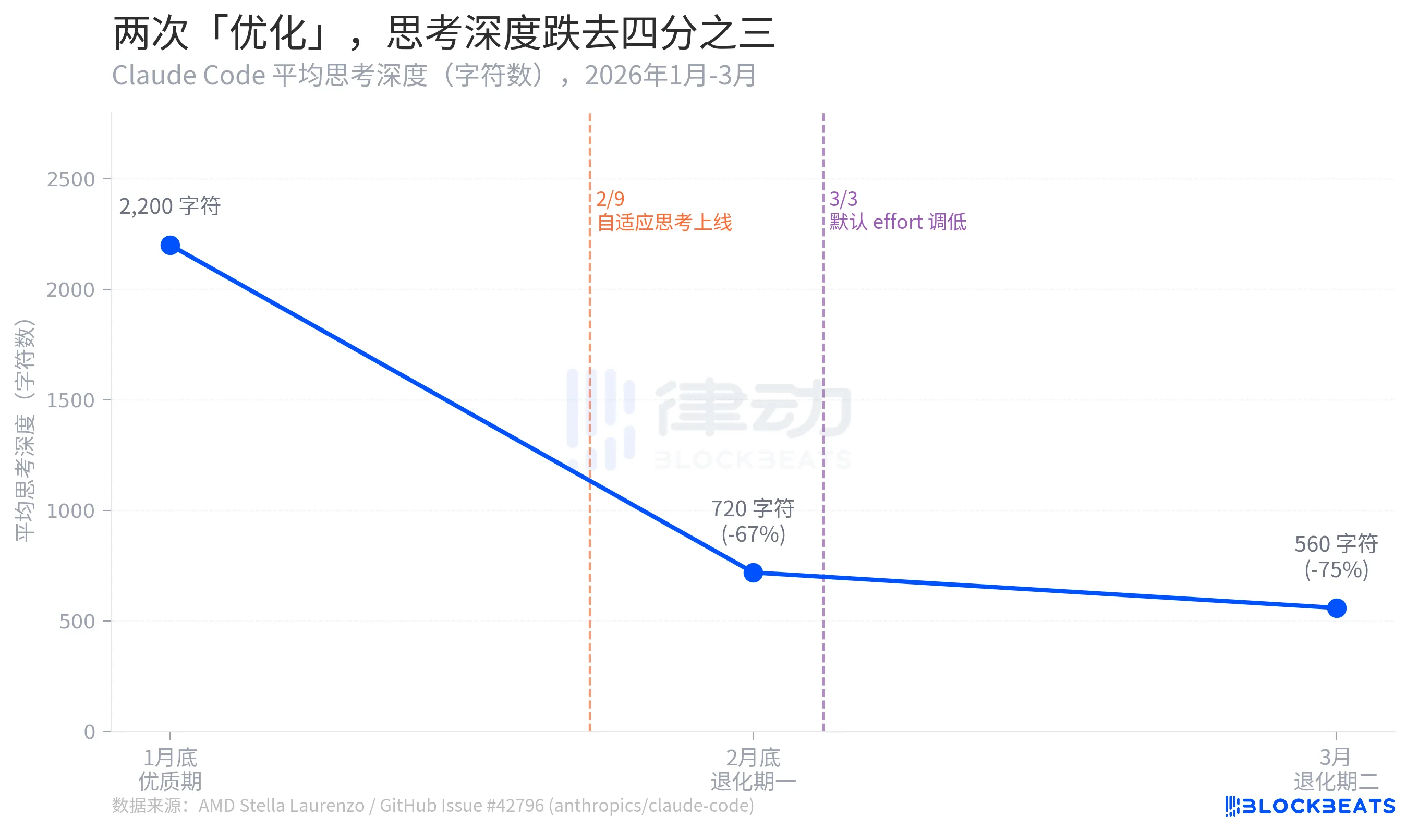

Thought Depth Plummets by Three Quarters

According to Stella Laurenzo's GitHub Issue data, Claude Code's average thought depth experienced a three-stage collapse over two months: from a high of 2,200 characters at the end of January to 720 characters by the end of February, a 67% drop. By March, it further shrunk to 560 characters, a 75% decrease from the peak.

Thought depth here is a proxy metric reflecting how much "internal deliberation" the model is willing to engage in before providing an answer. The difference between 2,200 and 560 characters is roughly equivalent to degrading from "drafting before responding" to "thinking for two seconds in your head before speaking."

Laurenzo also pointed out that the "Thought Content Redaction" feature (redact-thinking-2026-02-12) launched in early March coincidentally masked the model's thought process during this period, making the shrinkage less perceptible to users. Boris Cherny insists this was merely a UI change and did not affect the underlying reasoning. Both claims are technically valid, but from a user's perspective, the effect is indistinguishable.

Boris Cherny later acknowledged that even manually setting the effort back to maximum, the self-thought mechanism may still allocate insufficient reasoning in some rounds, leading to hallucinatory content. "Restoring maximum effort" is not a complete solution; it merely turns the knob back closer to its original position rather than restoring it to its original determinism.

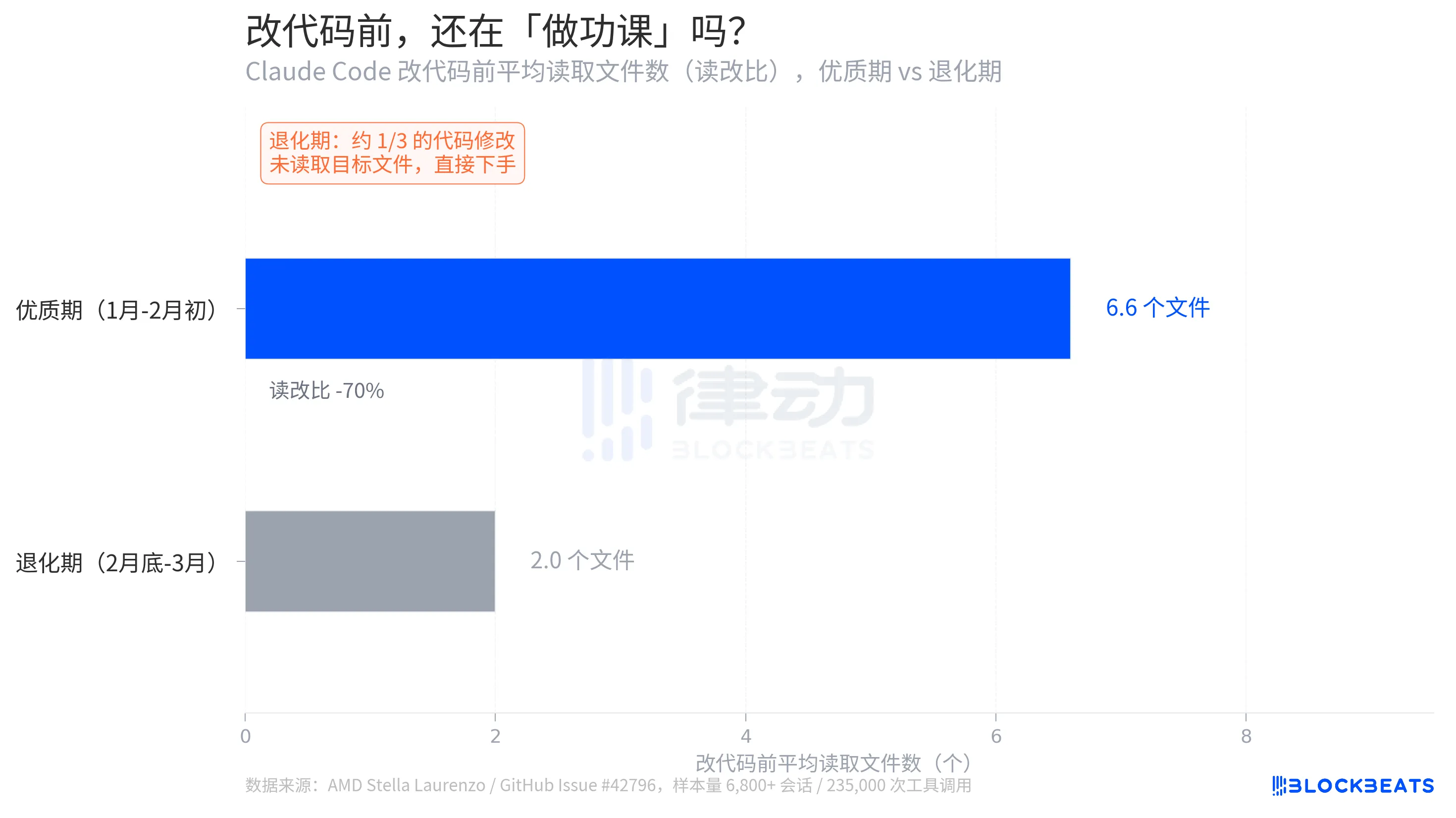

From "Research-Oriented Programmer" to "Blind Edit Programmer"

A detail in Stella Laurenzo's report is more explicit than thinking depth: how many relevant files the model actively reads before making changes to the code.

According to GitHub Issue data, during the prime period, the average read-to-edit ratio is 6.6. Before making a code change, the model, on average, reads 6.6 files to understand the context. During the decay period, this number drops to 2.0, a 70% decrease. More critically, about one-third of code edits occur without the model reading the target file, diving straight in.

Laurenzo refers to this as "blind edits." In engineering terms, this is akin to a programmer writing code without looking at function signatures or knowing variable types. "Every senior engineer on my team has had similar first-hand experiences," she wrote in her report. "Claude can no longer be trusted to carry out complex engineering tasks."

The drop from a 6.6 read-to-edit ratio to 2.0 is not merely a behavioral metric shift; it signifies a collapse in task success rates. The complexity of modern code repositories dictates that any modification involves dependencies across multiple files. Skipping context exploration and directly making changes doesn't lead to merely "incorrect answers" but rather to "seemingly correct changes that trigger new errors downstream. The cost of debugging such errors far exceeds that of a single failed explicit answer.

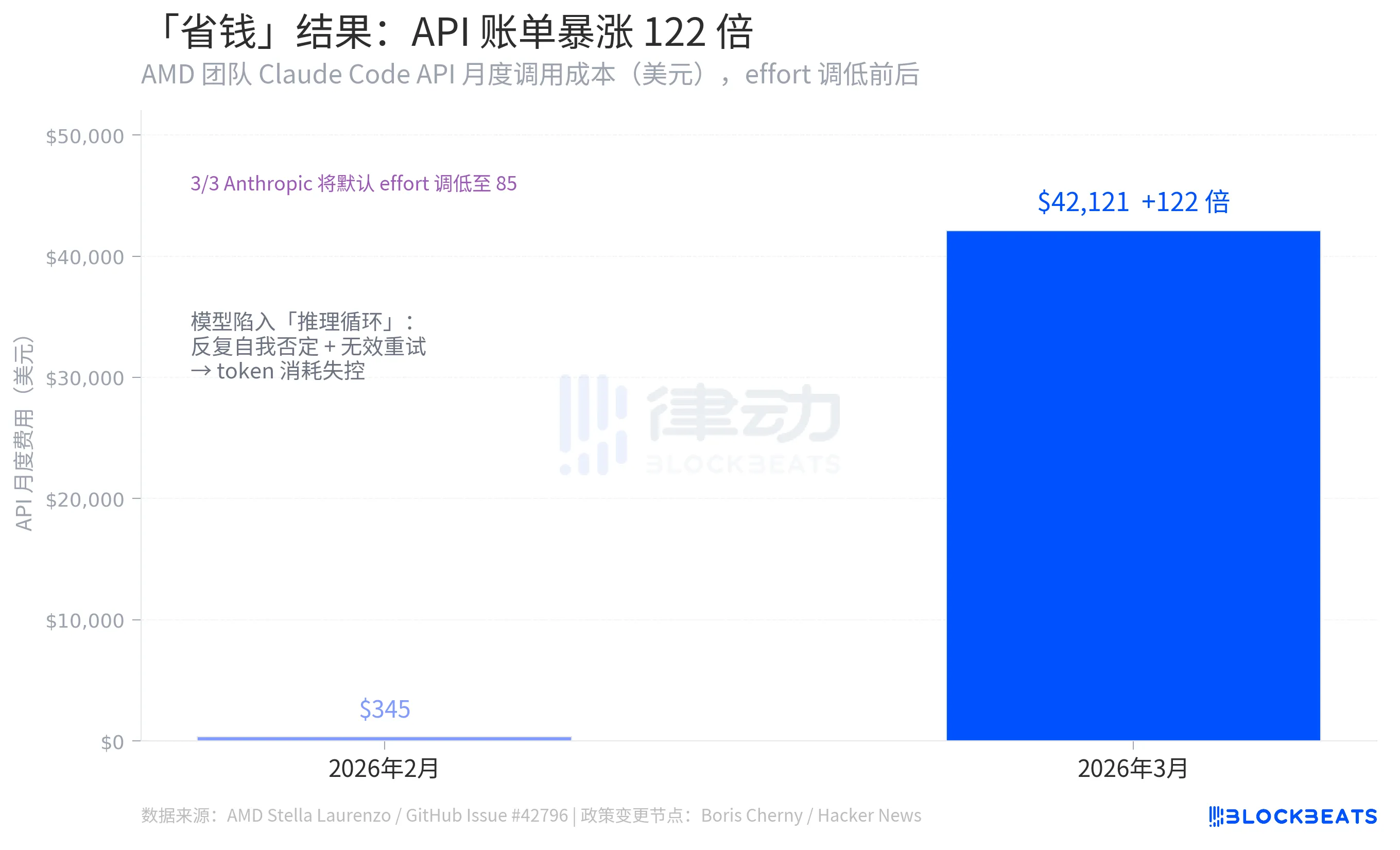

The Paradox of "Saving Money"

One of the most counterintuitive sets of numbers in the entire incident comes from the same GitHub Issue data: Stella Laurenzo's team saw the monthly invocation costs of Claude Code API plummet from $345 in February 2026 to a whopping $42,121 in March, a 122-fold increase.

The logic behind Anthropics' effort reduction was to lower the token consumption per call, thus reducing costs. However, the outcome was the opposite. The reason behind this was the emergence of numerous "reasoning loops" after the model's decay, leading to repeated self-negation within a single reply, constant restarts, and a token consumption far exceeding the saved amount. According to Stella Laurenzo's data, the rate of users voluntarily aborting tasks increased by 12 times during the same period, requiring developers' continuous intervention, correction, and resubmission.

The underlying logic is a systemic error. Slashing computational power on a complex task does not simply proportionally reduce costs. Once below a certain threshold of thought, the model starts to veer off track, and the overall cost ends up escalating. Lowering effort saved money on simple queries, but on coding tasks, it blew up the bill.

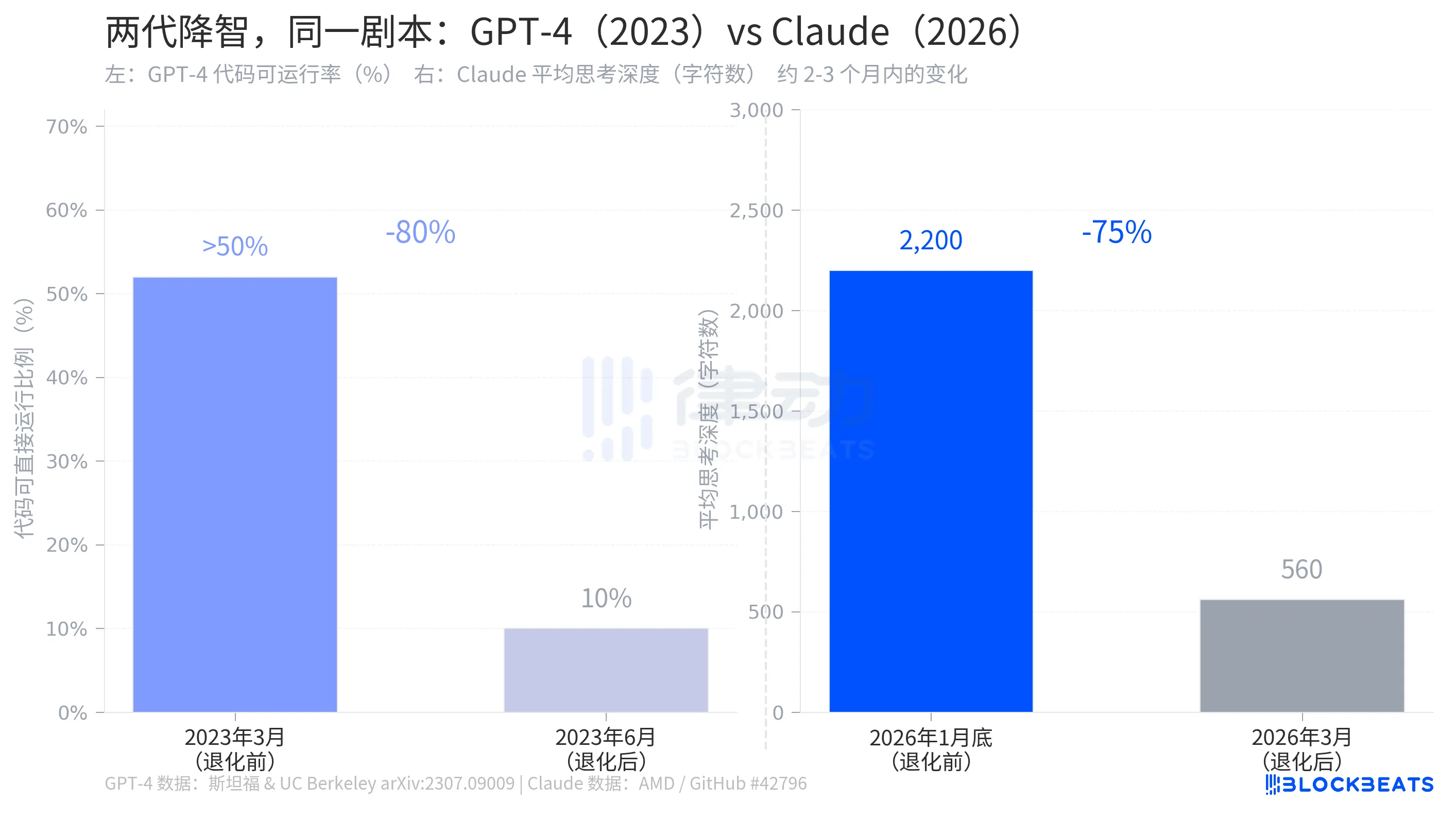

The "Dumbing Down" Thing, GPT-4 Did It Three Years Ago

In July 2023, a research team from Stanford University and the University of California, Berkeley, published a paper on arXiv titled "How is ChatGPT's behavior changing over time?", documenting the same phenomenon happening on GPT-4.

According to the research data, in March 2023, GPT-4 had generated code where over 50% was directly runnable. By June, this proportion had dropped to 10%, an 80% decrease over three months. During the same period, the prime number identification accuracy plummeted from 97.6% to 2.4%. OpenAI's response was highly similar to Anthropic's: there had been optimizations in the background, part of normal iteration.

The structure of the two stories is almost identical: an AI company quietly adjusted parameters affecting the model's capabilities in the background, users noticed, the company acknowledged the adjustment, but explained it as "more reasonable resource allocation." GPT-4's degradation occurred in 2023, Claude's degradation happened in 2026, three years apart, but the script is the same.

This is not a specific company's peculiar mistake. The economic logic of AI subscription models determines that when reasoning costs exceed the pricing that can be covered, manufacturers face the same pressure. Lowering the default thought intensity is currently the easiest knob to turn between cost and performance. What users perceive is the model "getting dumber." What the manufacturer saves on the books is the marginal token cost per call.

Boris Cherny has provided a technical solution where users can manually restore the thought intensity to the highest level through the /effort high command or by modifying the configuration file. This solution is technically feasible, but it also means that "maximum performance" is no longer the default setting.

From $345 to $42,121, what was spent was not just the budget but also an assumption: the default configuration changes made by the manufacturer were intended to improve user experience.