DeepTide Summary: Microsoft has finally hit a breaking point and is moving GitHub Copilot from a monthly subscription model to token-based billing. This is not a product upgrade but the collective bankruptcy of the AI industry's subsidy scheme: OpenAI, Anthropic, and others have used monthly fees to mask the true cost, letting users burn $8 to $13 worth of compute for every $1 spent and training a generation of usage habits that are fundamentally unsustainable. As prices return to reality, you may find that those "revolutionary" AI tools are nothing more than expensive toys.

I just wrote an article about how OpenAI is taking on Oracle, and this piece uses some of that material.

This is one of the best articles I have ever written, and I am extremely proud of it.

Subscribing to the paid version is both a great value and enables me to produce these large-scale, in-depth research articles every week.

Yesterday morning, GitHub Copilot users received confirmation of the news I reported a week ago: all GitHub Copilot plans will switch to a consumption-based model on June 1, 2026.

Microsoft will no longer give users a set number of "requests," but will instead charge based on the actual cost of the models they use. Microsoft calls this "…an important step towards a sustainable, reliable Copilot business and overall user experience." Users can now use only as many tokens as their subscription fee covers (e.g., a $19 per month plan buys $19 worth of tokens).

Translation: We can no longer subsidize GitHub Copilot users, or else Amy Hood (Microsoft CFO) will start hitting people with a baseball bat.

The announcement itself is an interesting preview, showing how these price changes will be framed:

Copilot is no longer the product it was a year ago. It has evolved from an in-editor assistant to an intelligent agent platform capable of running long, multi-step coding sessions, iterating across the entire codebase using the latest models. Intelligent agent use is becoming the default mode, bringing significantly higher computational and inference requirements.

Now, a quick chat question and a several-hour solo coding session may cost the user the same amount. GitHub has been absorbing the rising inference costs behind this kind of usage, but the current advanced-request model is no longer sustainable. Usage-based billing addresses this issue. It better aligns pricing with actual usage, helps us maintain long-term service reliability, and reduces the need to throttle heavy users.

You see, it's not Microsoft subsidizing the compute of nearly two million people, it's that AI has become so powerful and complex that it's essentially a different product!

While Copilot may no longer be "last year's product," the underlying economic mismatch has hardly changed: Microsoft has been allowing users to burn through more tokens per month than the subscription fee for three years now. According to a report by The Wall Street Journal in October 2023:

Individual users pay $10 per month to use this AI assistant. Based on data revealed by a source familiar with the matter, in the first few months of this year, the company was losing an average of over $20 per user per month, with some users costing the company $80 per month.

Naturally, GitHub Copilot users are now in revolt, saying the product is "dead" and "completely ruined."

I predicted this day in "The Subprime AI Crisis" two years ago:

That day has finally arrived because every AI service you use is subsidizing compute, and every service is losing money as a result:

When you pay for the services of an AI startup — including OpenAI and Anthropic, of course — you pay a monthly fee, such as Anthropic's Claude at $20, $100, or $200 per month, Perplexity at $20 or $200 per month, or OpenAI at $8, $20, or $200 per month for a subscription.

In certain enterprise scenarios, you are allocated certain "credits" for specific workloads, like Lovable giving users "100 monthly credits" in a $25 monthly subscription and $25 in cloud hosting (until the end of Q1 2026), with credits rolling over month to month.

When you use these services, the respective companies either pay the AI lab a set rate per million tokens or (in the case of Anthropic and OpenAI) pay a cloud provider to rent the GPUs that run the model. A token is roughly 3/4 of a word.

As a user, you don't feel the token burn, only the input and output process. The AI lab uses "tokens," "messages," or a 5-hour rate limit with a percentage-based cost to mask the service cost. As a user, you don't really know how much all of this actually costs.

In the backend, AI startups are burning cash like crazy, and until recently, Anthropic allowed you to burn up to $8 worth of compute power for every $1 of subscription fee. OpenAI also lets you do this, although it's hard to quantify exactly how much.

AI startups and cloud behemoths thought they could attract enough people with subsidies and loss-leading products to make users addicted enough to refuse to switch when the company raised prices. I guess they also thought that token costs would decrease over time, but what actually happened is that while prices for some models may have dropped, newer "reasoning" models burn more tokens, meaning that inference costs have increased over time.

Both assumptions were wrong because a monthly subscription model doesn't make sense for any service connected to a large language model.

The Fundamental Economic Model of Generative AI Has Collapsed

Think about it this way. When Uber (no, this is nothing like Uber) started raising ride prices, the underlying economic logic didn't change, and what was presented to passengers and drivers didn't change — users paid for a trip, drivers got paid for a trip.

Drivers still had to pay for gas, car insurance, any required local permits, and any financing costs for their vehicle, costs that Uber didn't subsidize. Uber's massive losses came from subsidies, endless marketing expenses, and doomed research efforts like self-driving cars.

Generative AI Subscriptions Are Nothing Like Uber

To illustrate the scale of AI mispricing, let me ask you to imagine a parallel history of Uber with a very different business model.

Generative AI subscriptions are like if Uber charged you $20 a month to take 100 rides within 100 miles, with gasoline priced at $150 per gallon, and Uber paid for the gas because someone insisted oil would one day become so cheap it would not be worth metering.

Uber would eventually decide to start charging users a monthly fee for ride privileges and then charge for the gasoline consumed by users. Suddenly users went from $20 a month for 100 rides to paying $20 for ride privileges plus $26 for a 10-mile trip's gasoline. Users would naturally be a little peeved.

While this may sound a bit exaggerated, it is actually a quite accurate metaphor for what is happening in the Generative AI industry, especially with GitHub Copilot.

GitHub Copilot's previous pricing allowed for 300 advanced requests per month, as well as "unlimited chat requests" using models like GPT-5 mini.

Each request (to quote Microsoft) is "...any interaction where you ask Copilot to do something for you," and in a request-based system, more expensive models will consume more requests, such as Claude Opus 4.6 taking up three advanced requests. When you run out of advanced requests, Copilot lets you use those cheaper models freely for the remainder of the month.

Things were not always like this, though. It wasn't until May 2025 that Microsoft even limited users' access to the models, and even then users were furious about any restrictions on the product.

Microsoft, like every AI company, has deceived customers with unsustainable services, as selling LLM-driven services on a monthly subscription has never made any sense.

If you're curious how much token-based billing services might cost, a user in the GitHub Copilot sub-forum found that the token consumption for a single advanced request was about $11, as one "request" involved using 60,000 tokens in a context window, several tools, and a bunch of internal "turns" (what the model is doing) to generate the output.

There is also an inherent unreliability in these large language models that can lead to hallucinations. While it may be frustrating to have an advanced request get stuck in a loop and spit out half-baked code, the same failure is not as easily forgiven when you're paying for it yourself.

Users have also been trained to use the product in a completely different way than traditional token-based billing, with many probably unaware of how many "tokens" they are burning or how much a particular task requires, as it varies depending on the model used.

This is a far cry from Uber, and anyone trying to tell you otherwise is attempting to justify bad behavior. Uber may surge-price, but it doesn't have to dramatically change the underlying economic logic of the platform, nor do users have to entirely shift how they use the product because Uber suddenly starts charging per gallon.

Monthly AI subscriptions are all part of the AI subsidy scam, a deliberate attempt to divorce Generative AI from its actual cost

There has never been — and never will be — an economically viable way to provide LLM-driven services unless charged based on actual per-user token consumption. Yet, these companies have created products with illusory benefits and questionable ROI while deceiving these users.

This has been obvious for years.

From an economic perspective, monthly subscriptions only make sense when costs are relatively static. Gyms can sell memberships because they roughly know how much equipment wears out, the operating costs of classes, and how much electricity, staff, and water might cost during a particular period.

The cost of serving Google Workspace customers, at least pre-AI, was the cost of accessing or storing documents, along with the ongoing costs of Google Docs and other services. Digital storage costs are relatively low (and unlike LLMs, Google Workspace does not have high computational demands), meaning a particularly heavy Google Drive user wouldn't eat into the profit on their monthly subscription.

However, these services intentionally obscure the number of tokens or how much a particular activity costs, meaning users don't really know what rate limiting means, and every sudden change in rate limiting leaves customers scrambling to figure out how much actual work they can do with the service.

This is an abusive, manipulative, and deceptive business practice, designed solely to allow Anthropic, OpenAI, and other AI companies to expand their user base, as most AI users entirely perceive their real or imagined benefits through the lens of being able to burn 8 to 13.5 dollars in tokens for every 1 dollar of subscription fee.

This intentional deception serves one purpose: to ensure that the vast majority of people never come into contact with the true cost of generative AI.

When The Atlantic writes a passionate piece about Claude Code being Anthropic's "ChatGPT Moment," it is based on a $20 monthly subscription, not the underlying token consumption cost that Anthropic incurs, which, in turn, leads the author to forgive the "small errors" the model might make or when it "gets stuck on more complex programming tasks."

If the author were to pay for her actual token consumption and each time it got stuck incurred a $15 token fee, I doubt she would be as forgiving of these failures.

But this is all part of the con.

It is extremely, extremely important that those writing about AI in the mainstream media do not actually understand how much these services cost, and any mainstream articles about services like ChatGPT or Claude Code are written by people who have almost no idea how much each individual task could cost the user.

Remember: Generative AI services are mostly experimental products, with functionality unlike any other modern software or hardware. People cannot just walk up to ChatGPT or Claude and demand that it work.

I mean, you can, but if your prompt is off, or you don't understand how it works, or you make a mistake in what you feed it, or if it just messes up, it will spit out something you don't like, which means you need to prompt it again. LLMs are inherently unpredictable.

You cannot guarantee that an LLM will perform a certain action, or that it will present you with a reality-based result. You cannot determine how much a particular task might cost you, even one you've done many times in the past with an LLM, and you cannot determine when the model might go crazy and delete something, or simply not do something but claim it did.

If users were forced to pay the actual rate, I think many would immediately abandon the product, because if you're blindly messing around exploring what an LLM can do, it's very easy to burn $5 in tokens.

Aside: In fact, you can burn a lot of money without ever getting the desired outcome, because LLMs are not real AI at all! Anyone who doesn't truly understand their limitations can easily burn $30, $50, or even $100 trying to persuade an LLM to do what it claims it can do.

There's a term for this: sycophancy. LLMs are often designed to affirm users, even when they're talking dangerously crazy, which can extend to answering "Can I do this technically or economically infeasible thing?" with "Sure you can!" That's why the industry works so hard to obfuscate these costs. This is damn extortion!

I believe that most AI subscriptions transitioning to token-based billing is inevitable, especially as Anthropic and OpenAI have now done this for enterprise customers.

Can regular companies afford to transition to token-based billing? Anthropic estimates users spend $13-30 a day on Claude Code (over $7,000 a year), with large organizations spending hundreds of thousands or millions of dollars per year

As I discussed last week, Uber's CTO stated at a conference that the company had already spent its entire 2026 AI budget within a few months. He suggested that some companies could allocate up to 10% of employee salaries to AI tokens, potentially increasing to 100% in the coming quarters.

This is a direct result of training every AI user to utilize these services as much as possible while masking their true costs. Every large company that mandates every employee to "use AI as much as possible" is essentially doing so while ignoring or completely disconnecting from the actual token consumption. As companies are forced to bear the actual cost, I'm not sure how you can economically justify any investment in this technology.

Of course, you might spout off about engineers "delivering code faster," and so on. But how much faster, exactly? How much money did you earn or save as a result? If you spend 10% of your labor costs on AI tokens, has this additional expense been recouped elsewhere?

I'm not sure. I'm not sure any enterprise that has invested heavily in tokens has seen a return on investment, which is why every study on AI ROI fails to find much evidence.

In most cases, those who wax poetic about the various possibilities of generative AI are experiencing it without bearing the real costs.

Every Twitter user endlessly tweeting about their entire engineering team going crazy with Claude Code is likely using a Teams subscription costing around $125 per person per month, with usage limits similar to the $100 monthly fee for Anthropic's consumer-facing subscription. Every LinkedIn user who insists they complete hours of work in minutes using some Perplexity product is probably spending at most $200 a month on Perplexity's Max subscription.

In reality, that 10-person, $1,250 per month Teams subscription likely incurs monthly API call costs of $5,000 to $10,000 or even more.

Anthropic's Growth Lead, Amol Avasare, stated last week that their Max subscription is designed for heavy chat use, not the tasks people are performing with Claude Code and Cowork. He explicitly mentioned that Anthropic is now considering "different options to continue providing a premium experience," which essentially means "we will adjust pricing at some point."

I am not sure if people realize how expensive these tokens are, especially when it comes to large codebases and programming projects that involve frequent coding and infrastructure tooling. Can someone who budgets $200 a month afford $350, $400, or $500? Can they handle going over that amount in a single month? What if the budget is exceeded? Or what if they truly cannot afford the cost of completing the work?

Here's a more practical example: as of early April, Anthropic's own Claude Code developer documentation read, "[Users of Claude Code] have an average cost of $6 per developer per day, with 90% of users staying under $12 per day." As of this week, the documentation now states:

Claude Code charges based on API token consumption. For pricing of subscription plans (Pro, Max, Team, Enterprise), please see claude.com/pricing. The cost per developer varies widely depending on model selection, codebase size, and usage patterns (such as running multiple instances or automation). In enterprise deployments, the average cost is around $13 per developer active day, $150-250 per month, with 90% of users staying under $30 per active day.

To estimate your team's expenses, start with a small pilot group, establish a baseline using the tracking tools below, and then expand.

If we assume an average of 21 working days in a month, a Claude Code user's average cost is approximately $273 per month, or $3,276 per year. At $30 per working day, that's $630 per month, or $7,560 per year.
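The arithmetic behind those figures is easy to check. Here is a minimal sketch, assuming (as the paragraph above does) 21 working days per month and treating every working day as an "active day"; the function name is mine, not Anthropic's.

```python
# Per-developer Claude Code cost, using the per-active-day figures quoted
# from Anthropic's documentation. The 21-day month is the article's assumption.
WORKING_DAYS_PER_MONTH = 21
MONTHS_PER_YEAR = 12


def monthly_and_annual_cost(cost_per_active_day):
    """Return (monthly, annual) cost for one developer."""
    monthly = cost_per_active_day * WORKING_DAYS_PER_MONTH
    return monthly, monthly * MONTHS_PER_YEAR


average_user = monthly_and_annual_cost(13)  # documented enterprise average
heavy_user = monthly_and_annual_cost(30)    # documented 90th-percentile ceiling

print(average_user)
print(heavy_user)
```

Note that the $273 monthly figure already sits above the "$150-250 per month" range in the same documentation, which suggests most developers are not active every working day.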

These numbers are staggering, and they get worse with Anthropic's newer models, where you cannot possibly get by on just $30 a day. Claude Opus 4.7 costs $5 per million input tokens and $25 per million output tokens. One million tokens is roughly equivalent to 50,000 lines of code. Assuming you're using a so-called state-of-the-art model, you're definitely going to hit at least one million tokens, and if you're unsure which model to use for a specific task, this number can quickly escalate.
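To get a feel for how quickly those rates add up, here is a rough sketch of the per-token math. The prices are the ones quoted above; the daily token mix is a purely hypothetical illustration, not a measurement of any real workload.

```python
# Token cost at the Claude Opus 4.7 rates quoted in the text.
INPUT_PRICE_PER_MILLION = 5.00    # dollars per million input tokens
OUTPUT_PRICE_PER_MILLION = 25.00  # dollars per million output tokens


def token_cost(input_tokens, output_tokens):
    """Dollar cost of a workload, given input and output token counts."""
    dollars_times_million = (
        input_tokens * INPUT_PRICE_PER_MILLION
        + output_tokens * OUTPUT_PRICE_PER_MILLION
    )
    return dollars_times_million / 1_000_000


# Hypothetical day of agentic coding: 4M tokens read in, 400K tokens written out.
daily_cost = token_cost(4_000_000, 400_000)
print(daily_cost)
```

Under that assumed mix, a single day lands exactly on the $30 ceiling, and agentic sessions that repeatedly re-read a large codebase could plausibly exceed it.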

Let's play around with that $30 figure again.

For a 10-person development team, that's $75,600 in a year, and we're only counting working days.

If the average rises to $50 per workday for three months of the year, you'll reach $88,200.

If, on top of that, one more month averages $100 per workday, you'll end up spending $102,900 in a year.

If you spend $300 per day, a team of 10 will spend $756,000 in a year.
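These team scenarios can be reproduced with a few lines. This is a sketch under my reading of the scenarios, namely that they stack (three months at $50 a day, then one further month at $100 a day, with the rest of the year at $30); that reading is an assumption, but it matches the totals above.

```python
# Annual spend for a 10-person team, given the average daily rate in each month.
TEAM_SIZE = 10
WORKING_DAYS_PER_MONTH = 21


def team_annual_cost(daily_rate_by_month):
    """Sum the cost across months; one list entry per month of the year."""
    return sum(
        rate * WORKING_DAYS_PER_MONTH * TEAM_SIZE for rate in daily_rate_by_month
    )


baseline = team_annual_cost([30] * 12)
three_months_at_50 = team_annual_cost([50] * 3 + [30] * 9)
plus_one_month_at_100 = team_annual_cost([100] + [50] * 3 + [30] * 8)
all_year_at_300 = team_annual_cost([300] * 12)

print(baseline, three_months_at_50, plus_one_month_at_100, all_year_at_300)
```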

While this might be feasible in cash-rich startups or in the rainy-day fund thinking of a Banana Republic like Meta, any truly cost-conscious business will find it hard to justify spending an additional five or six figures on a "productivity-enhancing" service, the efficacy of which seems immeasurable.

Now, I think most companies fall into three categories:

Enterprise deployments in large organizations like Spotify or Uber, with AI-obsessed CEOs who allow the budget to run rampant. I'd also say cash-rich large startups fall into this category.

Small startups with subsidized "Teams" subscriptions.

Individual users paying a monthly fee to access Claude or other AI subscriptions.

Large organizations can still claim they're burning millions in AI tokens for software engineers, based on the dubious benefit that the "best engineers" write no code.

All it takes is one bad earnings call to change this narrative. At a certain point, investors—even those who have been hyping up the AI bubble—will also start to question the escalating R&D costs (AI token consumption is often hidden here) when the company's revenue growth doesn't keep up.

This is likely to lead to more layoffs to control costs, similar to what Meta did, and ultimately a retrenchment when someone asks, "Did all this really help us work faster and better?"

Moreover, I believe that within six months, it will be tough for startups burning 10% or more of their labor costs on AI tokens to convince investors that it's necessary.

Once everyone moves to token-based billing, I'm not sure we'll see as much hype around generative AI.

The Economics of AI Data Centers and Computing Power Are Unrealistic

People are talking about AI data centers in a way that is completely divorced from reality, and I don't think they realize how absurd this whole era has become.

AI Data Centers Have High Construction and Operating Costs, Yet Generate Very Little Revenue

According to TD Cowen's Jerome Darling, critical IT (GPUs and related hardware) costs around $30 million per megawatt, with the data center capacity itself costing another $14 million per megawatt. Data centers seem to take one to three years to build, assuming a power supply is available.

Of the purported 114GW of data centers to be built by the end of 2028, only 15.2GW are under construction in any way, shape, or form. And "under construction" may only mean there is "a hole in the ground." It does not mean, and should not imply, that the facility's capacity will be available anytime soon.

Let's start with something simple: every time you think of "100MW," think of "$4.4 billion," with a significant portion going towards NVIDIA GPUs.
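That rule of thumb follows from the per-megawatt figures attributed to TD Cowen. Here is a small sketch, assuming both the $30 million (critical IT) and $14 million (data center capacity) figures are per megawatt, which is the reading that reproduces $4.4 billion.

```python
# All-in capital cost of AI data center capacity, per the TD Cowen figures.
IT_COST_PER_MW = 30_000_000     # GPUs and related hardware (assumed per MW)
BUILD_COST_PER_MW = 14_000_000  # the data center capacity itself (per MW)


def all_in_capex(megawatts):
    """Total capital cost in dollars for the given capacity."""
    return megawatts * (IT_COST_PER_MW + BUILD_COST_PER_MW)


capex_100mw = all_in_capex(100)
gpu_and_it_share = IT_COST_PER_MW / (IT_COST_PER_MW + BUILD_COST_PER_MW)

print(capex_100mw)       # dollars
print(gpu_and_it_share)  # fraction of spend going to IT hardware
```

On these numbers, a little over two thirds of every dollar goes to IT hardware rather than the building.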

Therefore, each AI data center starts out losing millions of dollars. Even with a six-year depreciation schedule, it will take many years to break even, and with NVIDIA's annual upgrade cycle, those GPUs are unlikely to make as much money once the first customer contract is complete.

It is also unclear whether a customer base for AI compute exists outside of OpenAI and Anthropic, which together account for 50% of the AI data centers being built. If either of them cannot pay, it would create a significant systemic weakness.

In any case, it is also unclear what kind of ongoing fee structure these data centers are charging. While spot prices may be around $4.50/hour/B200 GPU, long-term contract prices are usually much lower, with one founder (according to The Information) saying they pay about $3.70 per GPU per hour for a one-year commitment.

It needs to be clear that we must distinguish between spot costs—the cost of randomly launching a GPU on someone else's server—and contracted compute, which makes up the bulk of the data center's capital expenditure. Most data centers are built with the intention of having one or two large customers, meaning these customers may negotiate more favorable blended rates.

Therefore, the charges for many data centers are far below $3.70 per hour, as they are billed based on a per megawatt (or kilowatt) price.

This is where the economics begin to collapse.

The Economics of a 100MW Data Center — $2.55 per Hour, 16% EBITDA Margins at 100% Utilization, Unprofitable Due to Debt

This is the starting cost for a 100-megawatt data center. A 100MW data center might have only 85MW of actual chargeable capacity, according to discussions with a source familiar with hyperscale billing, with an estimated revenue of around $12.5 million per megawatt or annual revenue of around $1.063 billion.

Now, I should note that most data center companies you know of don't actually build them; they leave that to companies like Applied Digital, often referred to as "hosting partners." For instance, CoreWeave pays a hosting fee to Applied Digital to use its data center in North Dakota. CoreWeave is responsible for all GPUs and other tech in the data center.

To explain the economic mismatch, I'll be using a theoretical example of a data center leased to a theoretical AI compute company.

The data center's GPUs are likely NVIDIA's Blackwell chips. More specifically, it likely uses pods of 8 B200 GPUs, retailing for about $450,000 per pod, or $56,250 per GPU. Based on 85MW of critical IT load, the capital expenditure per megawatt is around $36.78 million, for a total IT capex of about $3.126 billion, of which around $2.67 billion is GPUs.
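For readers who want to see where the per-GPU-hour figure in the section heading might come from, here is a back-of-the-envelope sketch. It uses only numbers stated above (85MW billable, $12.5 million of revenue per megawatt, $450,000 pods of 8 GPUs, $2.67 billion of GPUs); dividing revenue across every GPU for every hour of the year is my assumption about the method, not something the source spells out.

```python
# Implied revenue per GPU-hour for the theoretical 100MW data center.
BILLABLE_MW = 85
REVENUE_PER_MW = 12_500_000
POD_PRICE = 450_000
GPUS_PER_POD = 8
GPU_CAPEX = 2_670_000_000
HOURS_PER_YEAR = 8_760

annual_revenue = BILLABLE_MW * REVENUE_PER_MW
price_per_gpu = POD_PRICE / GPUS_PER_POD
gpu_count = GPU_CAPEX / price_per_gpu

# Assumes every GPU is billed for every hour of the year (100% utilization).
revenue_per_gpu_hour = annual_revenue / (gpu_count * HOURS_PER_YEAR)

print(annual_revenue)
print(round(gpu_count))
print(round(revenue_per_gpu_hour, 2))
```

The result comes out at a little over $2.55 per GPU per hour, well under the $3.70 one-year contract price mentioned earlier.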

Assuming this data center is in Ellendale, North Dakota, that means an industrial power rate of around 6.31 cents per kWh, resulting in an annual power bill of about $54.4 million. Based on discussions with a source, I estimate ongoing costs like maintenance, personnel, power replacement, etc., to be around 12% of revenue, or about $128 million annually, bringing us to a cost of $183.4 million.

Hold on. You also need to pay a hosting fee for the critical IT load. According to Brightlio, this fee is typically around $180-$200 per kW per month, depending on the scale and location of the deployment, although I've read as low as $130, which I'll go with, or about $133 million annually. This brings us to $316.4 million.

Well, that's still less than $1.063 billion, so we're good, right?

Wrong! You have $3.126 billion worth of IT equipment to depreciate, with an annual depreciation of approximately $521 million over six years. That's $837.4 million per year in total costs, leaving you with around $168.6 million in annual profit, or about a 16.7% gross margin...
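The individual cost lines can be recomputed from the inputs given. This is a rough sketch: the power bill is taken as stated rather than derived, and because the text works from rounded inputs, the sum here lands within a few million dollars of the running total rather than matching it exactly.

```python
# Annual cost components for the theoretical 85MW (critical IT) deployment.
CRITICAL_IT_KW = 85_000
ANNUAL_REVENUE = 1_062_500_000    # 85MW x $12.5M per MW
POWER_BILL = 54_400_000           # as stated in the text, not derived here
OPS_SHARE_OF_REVENUE = 0.12       # maintenance, personnel, etc.
HOSTING_FEE_PER_KW_MONTH = 130    # low end of the hosting range
IT_CAPEX = 3_126_000_000
DEPRECIATION_YEARS = 6

ops_cost = OPS_SHARE_OF_REVENUE * ANNUAL_REVENUE
hosting_cost = HOSTING_FEE_PER_KW_MONTH * CRITICAL_IT_KW * 12
depreciation = IT_CAPEX / DEPRECIATION_YEARS  # straight-line

total_annual_cost = POWER_BILL + ops_cost + hosting_cost + depreciation

print(ops_cost, hosting_cost, depreciation)
print(total_annual_cost)
```

Depreciation alone is more than all the operating lines combined, which is why the GPU replacement cycle matters so much to these economics.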

...if you maintain a 100% occupancy rate! You see, the data center may need one to two months to install these GPUs and onboard customers. During this period, your revenue is zero, but the losses are much greater because you continue to pay for hosting, power, and operational costs, even though at much lower rates (I've modeled for 10% power and 15% hosting/operational costs), meaning you're losing approximately $3.27 million per day.

For the sake of this example, let's assume you need an additional month to get this up and running, which means you've already incurred about $102 million that you can't recover, bringing our total annual cost, including depreciation, to $939.4 million, or a 6.6% gross margin.

Wait, hold on, you didn't finance these GPUs with debt, did you? You did? How bad is it? Oh my—You've got an asset-backed loan for six years, with a loan-to-value ratio of 80%, meaning you borrowed $2.8 billion at a 6% interest rate.

Out of eternal generosity, your bank has granted you a deal: a 12-month grace period where you only pay interest... about $168 million, bringing our total cost for the first year (excluding the delayed month for fairness) to approximately $1.005 billion... with revenue of $1.06 billion.

That's a 5.19% gross margin, and you haven't even started repaying the principal yet. When that kicks in, you'll have to repay $54.1 million per month, or about $649 million per year for the next five years, bringing annual costs to approximately $1.48 billion, or a negative 40% gross margin.
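The debt figures are consistent with a standard amortizing loan. Here is a minimal sketch, assuming one interest-only year followed by level monthly payments over the remaining 60 months at 6% a year, compounded monthly; the loan structure is my assumption, since only the headline terms are given.

```python
# Debt service on a $2.8B asset-backed loan at 6%.
PRINCIPAL = 2_800_000_000
ANNUAL_RATE = 0.06
AMORTIZING_MONTHS = 60  # five years remaining after the interest-only year


def level_monthly_payment(principal, annual_rate, months):
    """Standard annuity formula for a fully amortizing loan."""
    monthly_rate = annual_rate / 12
    return principal * monthly_rate / (1 - (1 + monthly_rate) ** -months)


interest_only_year = PRINCIPAL * ANNUAL_RATE
monthly_payment = level_monthly_payment(PRINCIPAL, ANNUAL_RATE, AMORTIZING_MONTHS)
annual_debt_service = monthly_payment * 12

print(round(interest_only_year))
print(round(monthly_payment))
print(round(annual_debt_service))
```

Under those assumptions the monthly payment comes to roughly $54.1 million, or about $650 million a year, in line with the figures above.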

I must stress, this is if you have 100% utilization and tenants pay on time every time.

Stargate Abilene is a disaster—$2.94 per GPU per hour, $10 billion in annual revenue, years behind schedule, with only one tenant losing billions every year

Let's talk about what should have been the most economically viable single project in data center history: an expansive campus built for the world's largest AI company by Oracle, a decades-old near-hyperscale enterprise with a history of selling expensive databases and business management software to enterprises and governments.

Haha, I was definitely joking – this place is just a darn nightmare.

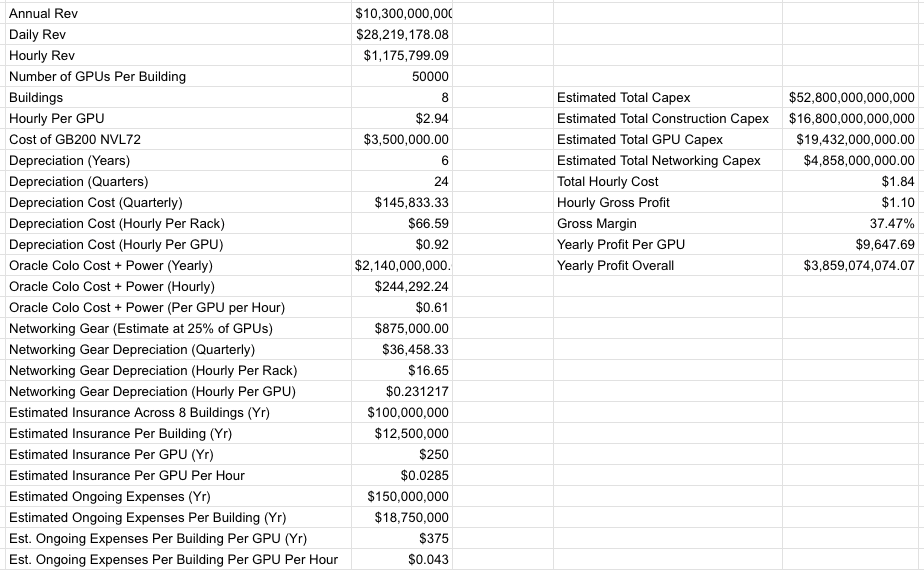

Stargate Abilene, an eight-building, 1.2GW data center campus with approximately 824MW of critical IT, was first announced in July 2024. As of April 27, 2026, only two buildings are operational and generating revenue, with the third building having minimal IT equipment. I estimate the total cost of Stargate Abilene to be around $52.8 billion.
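A quick sanity check on the scale involved. This sketch assumes the roughly $44 million per megawatt all-in cost discussed earlier applies to the full 1.2GW, which happens to reproduce the $52.8 billion estimate, and that critical IT load is split evenly across the eight buildings; both are my assumptions for illustration.

```python
# Stargate Abilene: rough cost and how much capacity is actually online.
TOTAL_CAMPUS_MW = 1_200
CRITICAL_IT_MW = 824
BUILDINGS = 8
OPERATIONAL_BUILDINGS = 2
ALL_IN_COST_PER_MW = 44_000_000  # assumed: $30M IT + $14M construction per MW

estimated_total_cost = TOTAL_CAMPUS_MW * ALL_IN_COST_PER_MW

# Assumes each building carries an equal share of the critical IT load.
it_mw_per_building = CRITICAL_IT_MW / BUILDINGS
online_it_mw = it_mw_per_building * OPERATIONAL_BUILDINGS
share_online = online_it_mw / CRITICAL_IT_MW

print(estimated_total_cost)
print(online_it_mw)
print(share_online)
```

On that even split, two buildings amount to about 206MW, a quarter of the campus's critical IT load.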

Based on my own reporting, Oracle expects to generate approximately $10 billion in annual revenue from Stargate Abilene, and I estimate a total revenue of around $75 billion from the 7.1GW of data center capacity it is building for one client: OpenAI. As I have also reported, Oracle estimated in 2024 that it would need to pay the land developer, Crusoe, a minimum of $2.14 billion annually for hosting and electricity at Abilene.

I should also note that it appears Oracle is bearing all of Abilene's construction costs.

Based on my calculations and reporting, I estimate that once Abilene is fully operational, the rough gross margin will be around 37.47%:

I must emphasize that a 37.47% gross margin may be too high as I do not have exact figures for Oracle's real insurance or personnel costs, only estimates based on documents viewed by this publication.

I should further clarify that Oracle is staking its entire darn future on projects like Stargate Abilene, incurring costs in the tens of billions upfront, with this business taking years to be profitable even if OpenAI pays on time.

Unfortunately, I cannot determine how much of Abilene is funded by debt. I only know that Oracle raised approximately $18 billion in September 2025 through issuing bonds of various maturities ranging from 7 to 40 years and reported a negative cash flow of $24.7 billion in the most recent quarter.

What I do know for sure is that Oracle has signed a 15-year lease with developer Crusoe and Oracle's future heavily relies on OpenAI's ability to make ongoing payments, which in turn depends on Oracle's completion of the Stargate Abilene project.

I also need to make it clear that the annual profit of $3.85 billion is only achievable if OpenAI makes payment on time, rapidly onboards Abilene, and everything goes according to plan.

If OpenAI fails to raise $852 billion in revenue, financing, and debt over the next 4 years, the Stargate Data Center project will bring Oracle down

Unfortunately, the exact opposite has occurred:

According to DatacenterDynamics, the initial 200MW of power was supposed to come online in "2025." The timeline kept shifting: onboarding was scheduled for the first half of 2025, with the potential to reach 1GW in 2025; the full 1.2GW was then to be energized by mid-2026, with 64,000 GPUs deployed by the end of 2026. As of September 30, 2025, "two buildings are live."

As of December 12, 2025, Oracle Co-CEO Clay Magouyrk stated "Abilene is on track, having delivered over 96,000 NVIDIA Grace Blackwell GB200s, which is the number of GPUs for two buildings."

Four months later, on April 22, 2026, Oracle tweeted "…at Abilene, the 200MW is operational, and the delivery of the eight-building campus remains on track." It's currently unclear whether this is the critical IT capacity of 200MW or the total available power for the Abilene campus. However, it is only enough to support two buildings, meaning Oracle is far from "on track."

This is a significant issue. OpenAI can only pay for compute that actually exists, and only 206MW of critical IT capacity is really generating revenue, with the third building taking at least another month (if not a quarter) to come online.

However, the entire Stargate Data Center project has an even larger, more fundamental issue—none of this makes sense unless OpenAI achieves its absurd, cartoonish predictions.

As I discussed on Friday:

Let me reiterate these numbers: The 7.1GW Stargate data center under construction will generate approximately $75 billion in annual revenue once completed, with a total cost exceeding $340 billion. Oracle has a negative free cash flow of $24.7 billion, and its other business lines are stagnating, making its low-margin to negative-margin cloud business the sole growth engine.

In order to truly afford its compute contracts, including those with Amazon, Microsoft, CoreWeave, Google, Cerebras, and Oracle, OpenAI must raise or earn
